Abstract

This is the third of three attempts to justify the use of 158-year-old credit ratings in the credit portfolio management process. In part 1 of this series, we showed that the use of historical ratings-based default rates severely overstates credit risk among all rated firms world-wide for the first 4 years. For years 5 through 10, the use of historical default rates dramatically understates credit risk. In part 2 of the series, we showed that trying to use ratings as a proxy for modern big data-based default probabilities failed as well, since the 20 ratings grades explained less than 38% of the variation in Kamakura Risk Information Services default probabilities at every maturity tested. In this installment, we change the focus to traded bond spreads in the hope that ratings provide higher explanatory power for spreads and bond valuation. Sadly, we again conclude that analyzing credit portfolio risk and valuation can be done more accurately without ratings than with ratings.

Introduction

One of the motivations for this series on credit portfolio management is a frequent question from both institutional and individual investors: “Why should I use default probabilities to manage credit risk? Aren’t credit ratings enough?” The answer is clearly no if the objective is to predict default. From an academic perspective, Hilscher and Wilson [2016] show that modern “reduced form” default probabilities are much more accurate in predicting default than credit ratings, which were invented in 1860 and have been largely unchanged since then. In part 1 of this series, we showed that the common use of historical ratings-based default rates dramatically overstated credit risk for all rated firms world-wide for maturities out to 4 years. For maturities of 5 years and longer, the use of historical default rates vastly understated the default risk among the rated universe of firms. The following graphic shows the degree of credit risk underestimate at a 10-year horizon as of June 22:

In part 2 of this series, we tried to “fix” ratings by tying them to modern big-data default probabilities from KRIS on a forward-looking basis. We found that 20 ratings grades explained less than 38% of the variation in default probabilities at every maturity tested. Here is a summary of the accuracy results for the same calculation as of Friday, June 22:

Why have ratings performed so poorly in the first two installments of this series? The answer is that the “analytical business model” of ratings, unchanged since 1860, is fundamentally flawed. The design decisions made in 1860 have not been changed in the 158-year history of credit ratings. The key design decisions can be summarized as follows:

1. 20 ratings grades are enough to explore the credit risk of public firms, no matter how many public firms there are.

2. There is no need for a term structure of credit risk.

3. There is no need to assign an explicit default probability for any time horizon to a ratings grade. Users can do that themselves if they want to do so.

4. There is no need to assign an explicit recovery rate to any ratings grade. Again, users can do that themselves if they want to.

5. There is no need to update ratings frequently. The author notes that a recent study showed that the mean time since the prior ratings change was 815 days among all rated firms world-wide (see van Deventer, July 8, 2013).

How can rating agencies survive with no change in credit technology over the last 158 years? A Managing Director for one of the two legacy rating agencies summarized the answer nicely in a Chicago credit conference in the fall of 2008 as the credit crisis unfolded: “Our clients prefer stability over accuracy.”

In the current environment, such a statement is not conducive to a long career in credit risk management. The chart below from KRIS shows that the expected 10-year cumulative default risk of all rated firms world-wide is now at 12.93%, just below the 13.33% level that prevailed in September 2008, the month that Lehman, FNMA, FHLMC and AIG all failed.

There is one last way in which we can try to save the ratings concept: change the focus from default prediction to bond valuation and traded bond credit spreads. Like our first two attempts in part 1 and part 2 of the series, this strategy to save the credit ratings concept is also a failed strategy. We explain why in what follows.

1. Should we predict spreads to predict bond value or predict bond value first and derive spreads from value?

For most of the last 50 years, fixed income analysts including the author have focused on explaining movements in credit spreads. In that traditional approach, step 1 is to predict credit spreads and step 2 is to predict bond values from those spreads.

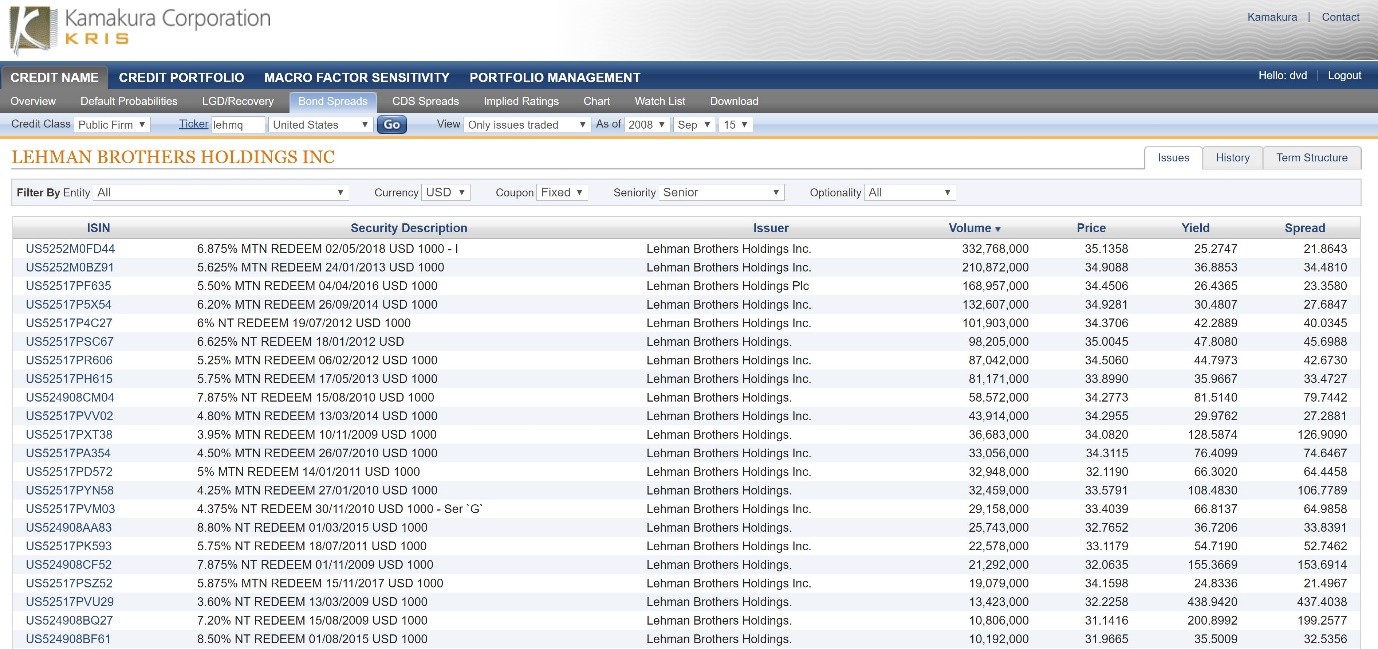

The problem with this strategy, as pointed out by Jarrow and van Deventer [2018], is that it simply doesn’t work. This can be illustrated with data from the bond market on September 15, 2008 for senior debt from Lehman Brothers Inc. Readers will recall that Lehman CEO Dick Fuld announced on Sunday, September 14, 2008 that Lehman would file for bankruptcy the following day, so this data point is one of the few where every rational fixed income analyst would agree on the default probability of a public firm. In this case, for Lehman Brothers, it was 100%. The chart below from KRIS shows the traded bond credit spreads for Lehman on that day:

Traded bond spreads for Lehman Brothers ranged from 9% to over 400%! Why? Because the credit spread calculation assumes that all payments of coupons and principal will be paid with certainty on the scheduled payment date, an assumption that is false for any issuer with a default probability greater than 0. A look at Lehman traded senior bond prices shows a much more reassuring story when the focus is on price, not credit spreads:

Traded prices for all Lehman senior debt issues clustered near the average price of 33.80, with a standard deviation of 1.60. The moral of the story is a simple one: it is much easier to model bond prices and derive credit spreads from them than to do the opposite, modeling spreads to derive price. The reason is that the credit spread calculation itself is riddled with model error, which introduces noise into credit analysis.
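The distortion can be reproduced with a little arithmetic. The sketch below is illustrative only and does not use the Lehman data: it assumes hypothetical bullet bonds with a 6% annual coupon, a flat 3% continuously compounded risk-free rate, and a common price of 33.80 for every maturity. It then solves for the spread that the conventional calculation would report, treating every scheduled payment as certain.

```python
import math

PRICE = 33.80       # common traded price, echoing the average quoted above
RISK_FREE = 0.03    # hypothetical flat risk-free rate (assumption)
COUPON = 0.06       # hypothetical annual coupon rate (assumption)

def make_bond(maturity, coupon_rate, face=100.0):
    """Scheduled (time, amount) cash flows, annual coupons counted back from maturity."""
    times = []
    t = maturity
    while t > 1e-9:
        times.append(t)
        t -= 1.0
    times.reverse()
    flows = [(t, face * coupon_rate) for t in times]
    flows[-1] = (maturity, face * coupon_rate + face)  # final coupon plus principal
    return flows

def present_value(cash_flows, rate):
    """Discount every scheduled payment as if it were certain to be paid."""
    return sum(amount * math.exp(-rate * t) for t, amount in cash_flows)

def implied_spread(cash_flows, price, risk_free, lo=0.0, hi=50.0, tol=1e-10):
    """Bisection for the spread s with present_value(risk_free + s) == price."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if present_value(cash_flows, risk_free + mid) > price:
            lo = mid   # model price too high, so the spread must be larger
        else:
            hi = mid
    return 0.5 * (lo + hi)

maturities = [0.25, 1.0, 5.0, 10.0, 30.0]
spreads = [implied_spread(make_bond(m, COUPON), PRICE, RISK_FREE) for m in maturities]

for m, s in zip(maturities, spreads):
    print(f"maturity {m:5.2f} years: implied spread {100 * s:7.1f}%")
```

Under these assumptions the shortest bond shows a spread of several hundred percent while the longest shows a spread well under 30%, even though all five bonds trade at exactly the same price. The dispersion comes entirely from the spread formula, not from any difference in credit quality.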

What does that mean for credit ratings?

We answer the question in two ways. First, we assess the accuracy of using credit ratings to model credit spreads in the traditional way in Section 2. Second, we assess the accuracy of using credit ratings to model bond net present values (price plus accrued interest) in Section 3.

2. Model Validation for a Ratings-Based Prediction of Credit Spreads

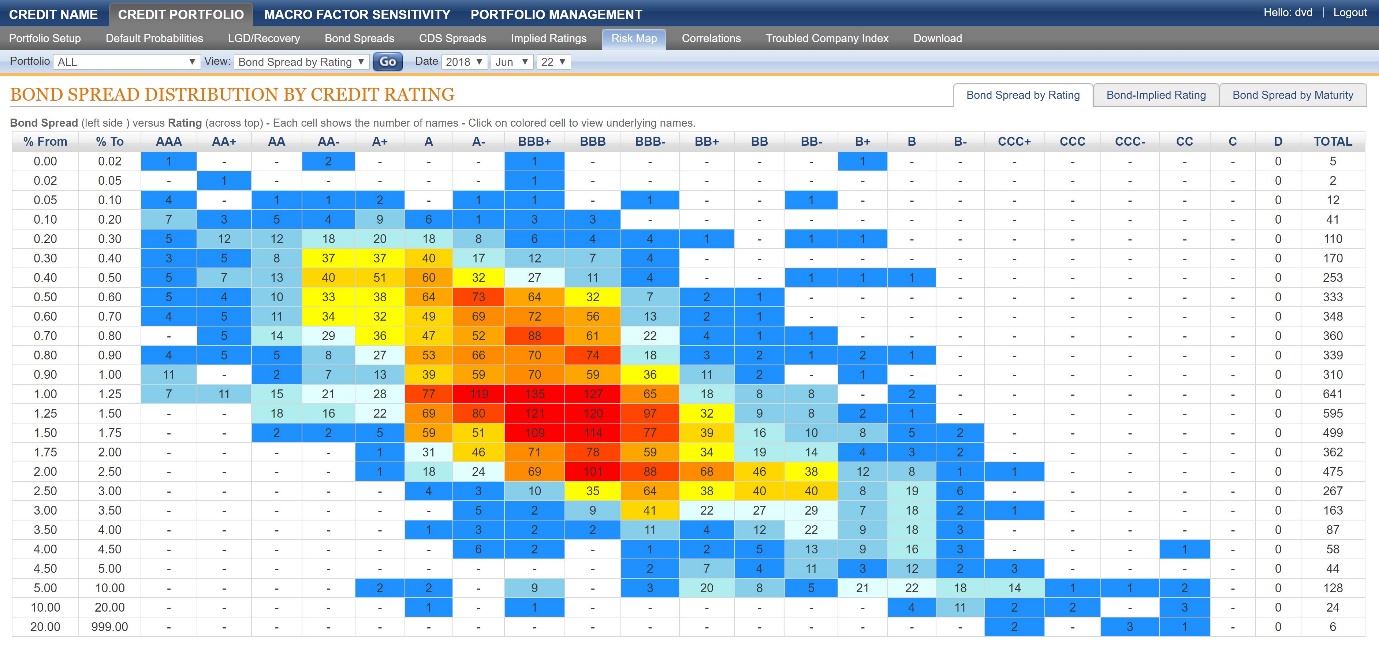

Each day the Kamakura Risk Information Services modeling team conducts an analysis of the validity of the use of all primary default models, including credit ratings. This graphic from KRIS shows that the correlation of credit ratings and traded bond spreads in the U.S. corporate bond market on Friday June 22 is quite low:

Since visual inspection is not enough, we seek to quantify the explanatory power of 20 ratings grades in explaining the traded credit spreads of all senior fixed rate bonds reported by the TRACE system on June 22. To avoid predicting negative traded credit spreads, we model the log of the credit spread using dummy variables for each of the 20 ratings grades. More formally, we used generalized linear methods and a log link function to fit credit spreads. The results are shown in this graph:

On June 22, ratings explained 53% of the variation in traded credit spreads for 4,579 traded bonds in the U.S. corporate market. The standard deviation of the error in modeling spreads was 125 basis points, a huge “miss.” Given the Lehman data above, this result should come as no surprise. We now try to model bond prices instead in the hope of a better result.
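For readers who want to see the mechanics, here is a minimal sketch of a dummy-variable fit with a log link. It is not the KRIS implementation. It assumes a Gaussian error family (the text above does not specify one) and uses a handful of made-up spreads in basis points. With one dummy per rating and nothing else, the least-squares solution under a log link has a closed form: the fitted spread for each rating is the average spread of that rating, and the coefficient is its logarithm, which guarantees a positive fitted spread.

```python
import math
from collections import defaultdict

# Hypothetical (rating, traded spread in basis points) observations.
observations = [
    ("AA", 45.0), ("AA", 60.0),
    ("A", 80.0), ("A", 120.0),
    ("BBB", 150.0), ("BBB", 260.0),
    ("BB", 300.0), ("BB", 520.0),
]

def fit_rating_dummies(obs):
    """Return one log-link coefficient per rating: log of the rating's mean spread."""
    by_rating = defaultdict(list)
    for rating, spread in obs:
        by_rating[rating].append(spread)
    return {r: math.log(sum(v) / len(v)) for r, v in by_rating.items()}

def goodness_of_fit(obs, betas):
    """R-squared and standard deviation of the spread errors, both in spread units."""
    spreads = [s for _, s in obs]
    mean_all = sum(spreads) / len(spreads)
    residuals = [s - math.exp(betas[r]) for r, s in obs]
    sse = sum(e * e for e in residuals)
    sst = sum((s - mean_all) ** 2 for s in spreads)
    return 1.0 - sse / sst, math.sqrt(sse / len(residuals))

betas = fit_rating_dummies(observations)
r_squared, error_std = goodness_of_fit(observations, betas)
print(f"R-squared: {r_squared:.3f}, error std: {error_std:.1f} bp")
```

The R-squared here measures only how much of the cross-sectional variation in spreads is captured by knowing the rating grade. Everything within a grade, including maturity, is left in the error term.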

3. Model Validation for a Ratings-Based Prediction of Bond Prices

We use the following analysis to maximize the predictive capabilities of ratings “as is,” i.e. we don’t try to fix obvious flaws in ratings like a lack of maturity structure. On each business day starting on September 1, 2017, we solve for the single credit spread for each of the 20 ratings grades which minimizes the sum of squared pricing errors (on a trade volume-weighted basis) for that rating grade. For example, for all bonds of all BBB+ rated issuers on September 1, we use non-linear least squares to find the constant credit spread C_BBB+ that minimizes the pricing errors for bonds with a BBB+ rating. We do the same for bonds with all other ratings. We measure the standard error in predicting bond prices for each day for all ratings.
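The single-spread fit for one rating grade on one day can be sketched as follows. This is an illustration under stated assumptions, not the production calculation: the bonds, prices, volumes and the flat 3% risk-free rate are all hypothetical, discounting is continuous, and a golden-section search stands in for whatever non-linear least squares routine is actually used.

```python
import math

RISK_FREE = 0.03  # hypothetical flat risk-free rate (assumption)

def bullet(maturity_years, coupon):
    """Annual-coupon bullet bond with face value 100 as (time, amount) pairs."""
    flows = [(float(t), 100.0 * coupon) for t in range(1, maturity_years)]
    flows.append((float(maturity_years), 100.0 * (1.0 + coupon)))
    return flows

def model_price(cash_flows, spread):
    """Price with one constant spread added to the risk-free rate."""
    return sum(a * math.exp(-(RISK_FREE + spread) * t) for t, a in cash_flows)

def weighted_sse(spread, bonds):
    """Trade volume-weighted sum of squared pricing errors."""
    return sum(vol * (model_price(cf, spread) - px) ** 2 for cf, px, vol in bonds)

def fit_constant_spread(bonds, lo=0.0, hi=1.0, tol=1e-9):
    """Golden-section search for the spread minimizing weighted_sse on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if weighted_sse(c, bonds) < weighted_sse(d, bonds):
            b = d
        else:
            a = c
    return 0.5 * (a + b)

# Hypothetical BBB+ bonds: (cash flows, traded price, trade volume).
# Prices are generated at slightly different spreads to mimic market noise.
bbb_plus_bonds = [
    (bullet(3, 0.04), model_price(bullet(3, 0.04), 0.018), 5_000_000),
    (bullet(7, 0.05), model_price(bullet(7, 0.05), 0.020), 2_000_000),
    (bullet(10, 0.045), model_price(bullet(10, 0.045), 0.022), 1_000_000),
]

fitted_spread = fit_constant_spread(bbb_plus_bonds)
pricing_errors = [model_price(cf, fitted_spread) - px for cf, px, _ in bbb_plus_bonds]
print(f"fitted BBB+ spread: {1e4 * fitted_spread:.1f} bp")
```

Because one number has to price every bond in the grade, the fitted spread lands between the spreads of the individual bonds, and the leftover pricing errors are what the daily standard error measures.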

As a challenger model, we calculate the goodness of fit to bond prices using KRIS default probabilities augmented with a liquidity factor and recovery rate as explained in Jarrow and van Deventer [2018]. The chart below shows the standard deviation of ratings-based pricing errors (red dots) versus the KRIS default probability-based pricing errors (in blue):

On every single business day from September 1, 2017 through June 22, 2018, the standard deviation of pricing errors was smaller using KRIS default probabilities than it was using ratings. In short, the KRIS default probabilities are more accurate in predicting bond prices than ratings on every single day.

A simpler question is this: “Bond by bond, day by day, which method is more accurate in predicting bond prices?” The answer is shown in this graphic from Jarrow and van Deventer [2018]:

In short, using KRIS default probabilities to predict bond prices is more accurate than ratings for 89.2% of more than 70,000 observations over this time period. The win rate using credit spreads as a measure is similar.
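Both comparisons, the daily standard deviation of pricing errors and the bond-by-bond win rate, are simple to compute once the two sets of pricing errors are available. The sketch below uses a few made-up errors purely to show the bookkeeping. The dates, error values and variable names are hypothetical, and the output says nothing about the actual results reported above.

```python
import statistics

# Hypothetical pricing errors (model price minus traded price) by business day.
# Each pair is (ratings-based error, default probability-based error) for one bond.
errors_by_day = {
    "2017-09-01": [(1.8, -0.4), (-2.5, 0.9), (0.3, -0.6), (3.1, 1.2)],
    "2017-09-05": [(-1.1, 0.2), (2.2, -0.7), (-0.9, 1.0), (1.6, 0.5)],
}

def daily_error_std(by_day):
    """Standard deviation of pricing errors per day for each method."""
    result = {}
    for day, pairs in by_day.items():
        ratings_errors = [r for r, _ in pairs]
        dp_errors = [d for _, d in pairs]
        result[day] = (statistics.pstdev(ratings_errors), statistics.pstdev(dp_errors))
    return result

def win_rate(by_day):
    """Share of bond-day observations where the default probability error is smaller."""
    wins = 0
    total = 0
    for pairs in by_day.values():
        for ratings_error, dp_error in pairs:
            wins += abs(dp_error) < abs(ratings_error)
            total += 1
    return wins / total

std_by_day = daily_error_std(errors_by_day)
dp_win_rate = win_rate(errors_by_day)

for day, (ratings_std, dp_std) in std_by_day.items():
    print(f"{day}: ratings std {ratings_std:.2f}, default probability std {dp_std:.2f}")
print(f"default probability win rate: {100 * dp_win_rate:.1f}%")
```

The two measures answer different questions. The daily standard deviation can be dominated by a few large misses, while the win rate counts every bond equally regardless of the size of the error, so it is worth reporting both.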

4. Conclusions

Standard model validation procedures were used to measure the ability of credit ratings to predict bond prices. We focused on prices, not spreads, because of the model risk issues embedded in spreads as revealed by the Lehman Brothers experience. The challenger model was an analysis doing the same calculation using KRIS default probabilities instead of credit ratings. The results are definitive: KRIS default probabilities have a lower standard deviation in fitting traded senior non-call bond prices on every single business day from September 1, 2017 through Friday, June 22, 2018.

Is there any reason to use credit ratings in credit portfolio management instead of modern big data default probabilities? If accuracy is the foremost concern, the answer is no. If stability is more important than accuracy, using the phrase from 2008, there is one more credit model that is even more stable than ratings and that has a history of thousands of years. Since accuracy is less important than stability in this case, we recommend setting all default probabilities equal to Pi = 3.1415926535897932384626433832795028841971693993751058.

References

Hilscher, Jens and Mungo Wilson, “Credit Risk and Credit Ratings: Is One Measure Enough?” Management Science, October 17, 2016.

Jarrow, Robert, David Lando, and Fan Yu, “Default Risk and Diversification: Theory and Applications,” Mathematical Finance, January 2005, pp. 1-26.

Jarrow, Robert and Donald R. van Deventer, “The Ratings Chernobyl,” Kamakura Corporation blog at www.kamakuraco.com, reproduced by the Global Association of Risk Professionals and www.riskcenter.com, March 9, 2009.

Jarrow, Robert and Donald R. van Deventer, “The Valuation of Corporate Bonds,” Kamakura Corporation and Cornell University memorandum, May 1, 2018.

S&P Global Ratings, “Default, Transition, and Recovery: 2016 Annual Global Corporate Default Study And Rating Transitions,” April 13, 2017.

United States Senate Permanent Subcommittee on Investigations, Committee on Homeland Security and Governmental Affairs, “Wall Street and the Financial Crisis: Anatomy of a Financial Collapse,” April 13, 2011.

van Deventer, Donald R. “How Stale are Credit Ratings?,” www.seekingalpha.com, July 8, 2013.

van Deventer, Donald R. “‘Point in Time’ versus ‘Through the Cycle’ Credit Ratings: A Distinction without a Difference,” Kamakura Corporation blog at www.kamakuraco.com, May 9, 2009.

van Deventer, Donald R. “A Quantitative Assessment Of Errors From The Use Of Credit Ratings In Credit Portfolio Management, Part 1,” Kamakura Corporation blog at www.kamakuraco.com and www.seekingalpha.com, June 17, 2018.